Connector hub

Recent articles

Neuroscientist Mac Shine interviews researchers whose work traverses traditional boundaries.

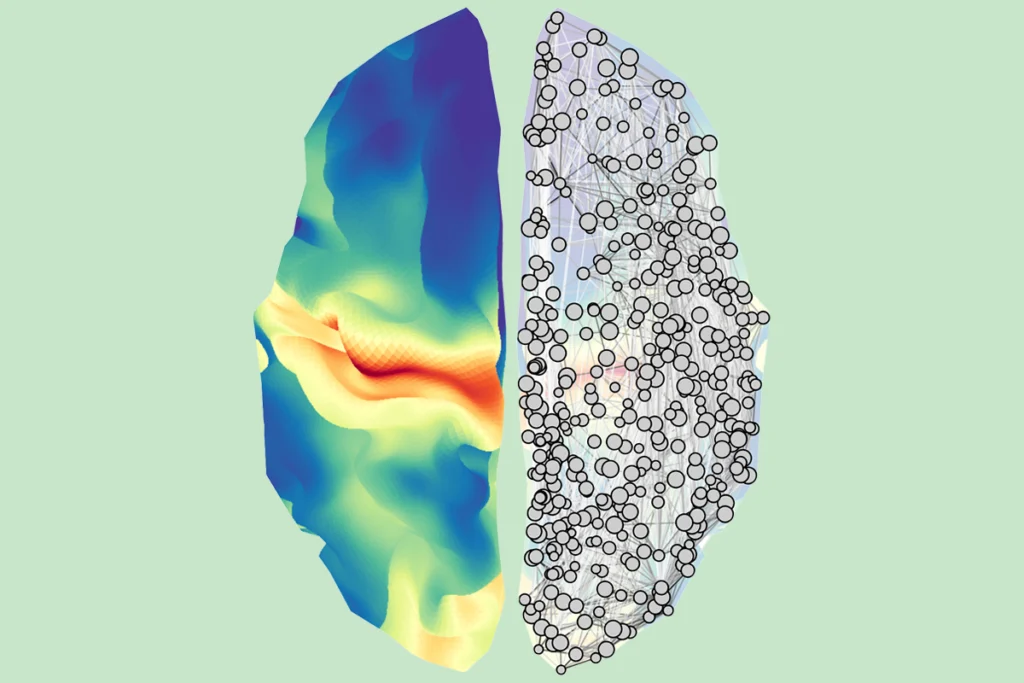

How can we fold cellular-level details into whole-brain neuroimaging networks?

I got answers from Bratislav Misic, who is inventing practical ways to connect the brain’s microscopic features with its macroscopic organization.

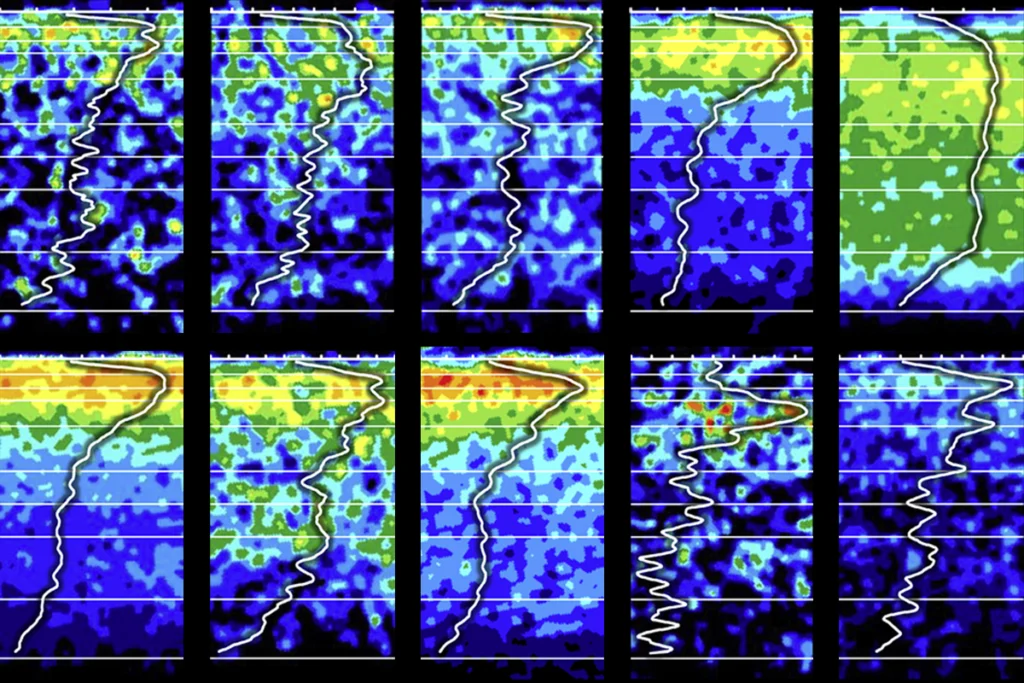

What happens when a histopathologist teams up with computational modelers?

Answers emerge in my chat with Nicola Palomero-Gallagher, a rare example of someone who connects the brain’s microscopic constituents and macroscopic features.

Explore more from The Transmitter

Researchers retract multisensory learning paper after failed replications

Even though one set of experiments did not hold up, the authors stand by the original conclusions of the work and plan to resubmit it as a new paper.

Cortical evolution, ZBTB18, and more

Here is a roundup of autism-related news and research spotted around the web for the week of 30 March.

Letter asks Congress for nearly $500 million to sustain BRAIN Initiative

The one-time boost would help counter the planned end this year to one of the program’s long-standing funding streams, which will result in a $195 million drop in funding for fiscal year 2027.
