Collaboration

Recent articles

How to be a multidisciplinary neuroscientist

Neuroscience subfields are often siloed. Embracing an integrative approach during training can help change that.

Should I work with these people? A guide to collaboration

Kevin Bender offers advice for early-career neuroscientists on how to choose the right collaborations and avoid the bad ones.

Explore more from The Transmitter

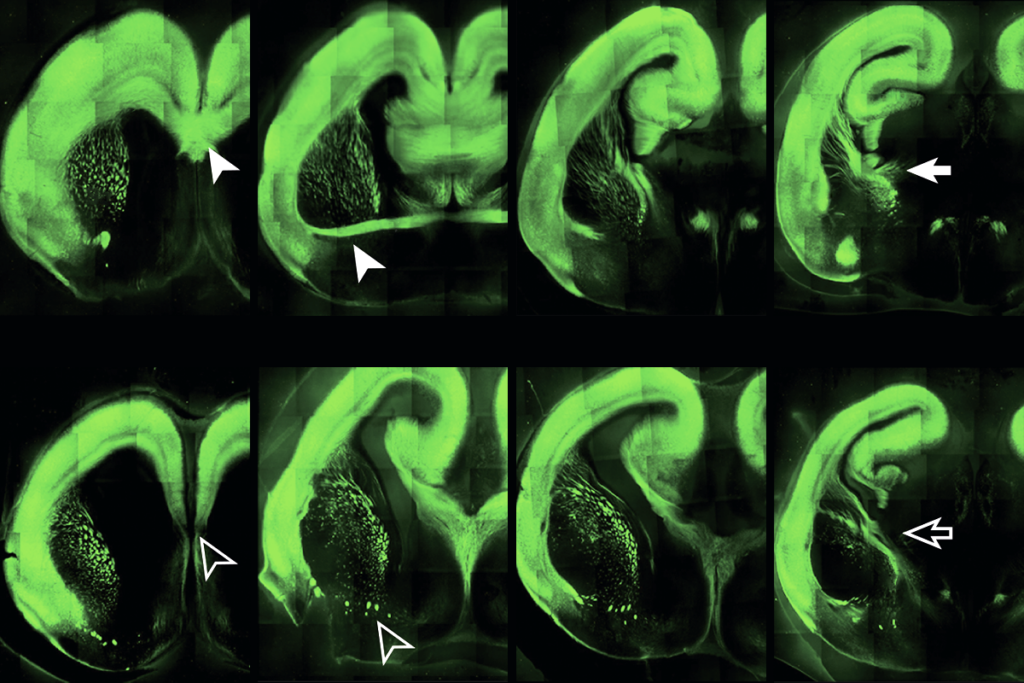

Neuro’s ark: Sounding out the evolution of hearing with geckos

Catherine Carr explains her discovery that geckos retain a vibration-sensing pathway previously thought to be lost when animals moved onto land.

Researchers retract multisensory learning paper after failed replications

Even though one set of experiments did not hold up, the authors stand by the original conclusions of the work and plan to resubmit it as a new paper.

Cortical evolution, ZBTB18, and more

Here is a roundup of autism-related news and research spotted around the web for the week of 30 March.
