Mona AlQazzaz is a community engagement scientific fellow and alliance manager at YCharOS and the Structural Genomics Consortium, an open-science effort to solve protein structures.

From this contributor

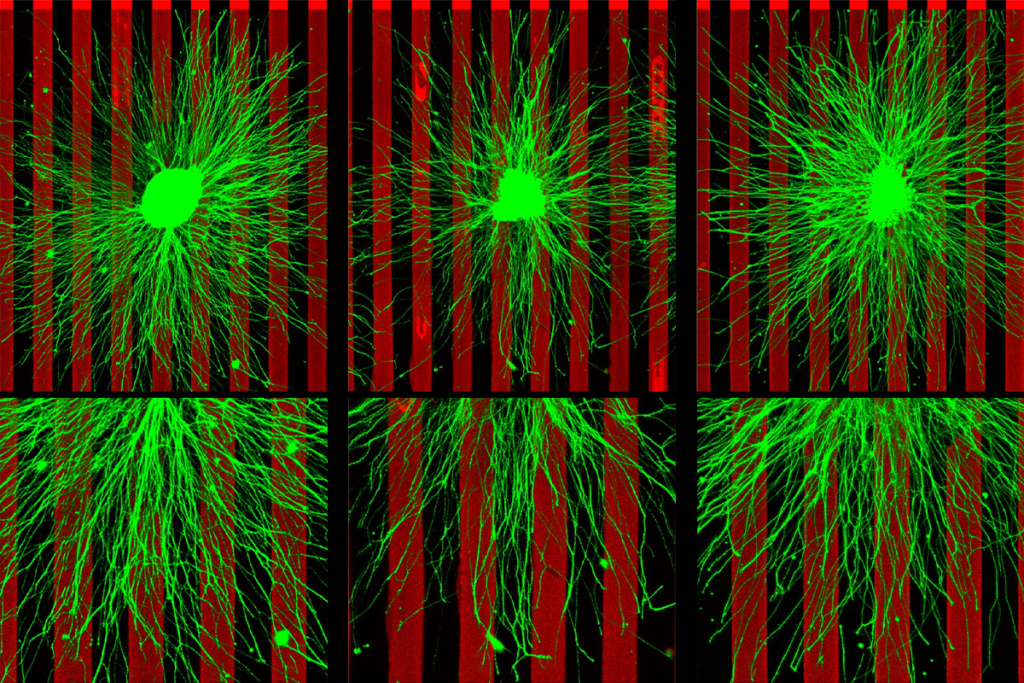

We found a major flaw in a scientific reagent used in thousands of neuroscience experiments — and we’re trying to fix it.

As part of that ambition, we launched a public-private partnership to systematically evaluate antibodies used to study neurological disease, and we plan to make all the data freely available.

Explore more from The Transmitter

The illusion of AI consciousness: Lessons from human unconscious processing

Complex, goal-directed and even emotionally responsive behavior can unfold without awareness, providing a useful lens for interpreting artificial systems.

‘Push-pull’ recipe for neural wiring used in multiple brain regions

A versatile pair of proteins steers neurons toward their targets and helps establish the brain’s sensory maps, new studies suggest.

Reward-learning algorithm hardwired into dopamine circuit

The finding bolsters the canonical model of reward prediction error, which has come under scrutiny in recent years.
