Duplicated images and fabricated data plague a paper claiming that a microRNA treatment can decrease autism-like traits in mice, according to research integrity analysts.

Led by corresponding author Xin Yu, the study investigators looked at a commonly used mouse model of autism: mice that have been exposed in utero to valproate. Their findings led them to conclude that microRNA-153 could mitigate some of the changes induced by such exposure. The researchers are associated with China’s Affiliated Hospital of Jining Medical University.

But critics say the study appears to have used images from unrelated studies, and data that overlap with other work. The issues align with patterns seen in work by so-called ‘paper mills,’ companies that sell fraudulent scientific papers to researchers, the analysts say.

“It just seems implausible that a single microRNA is having all of these very convenient actions to alleviate autism symptoms,” says Jennifer Byrne, professor of molecular oncology at the University of Sydney in Australia, who has worked on research integrity issues for several years.

Y

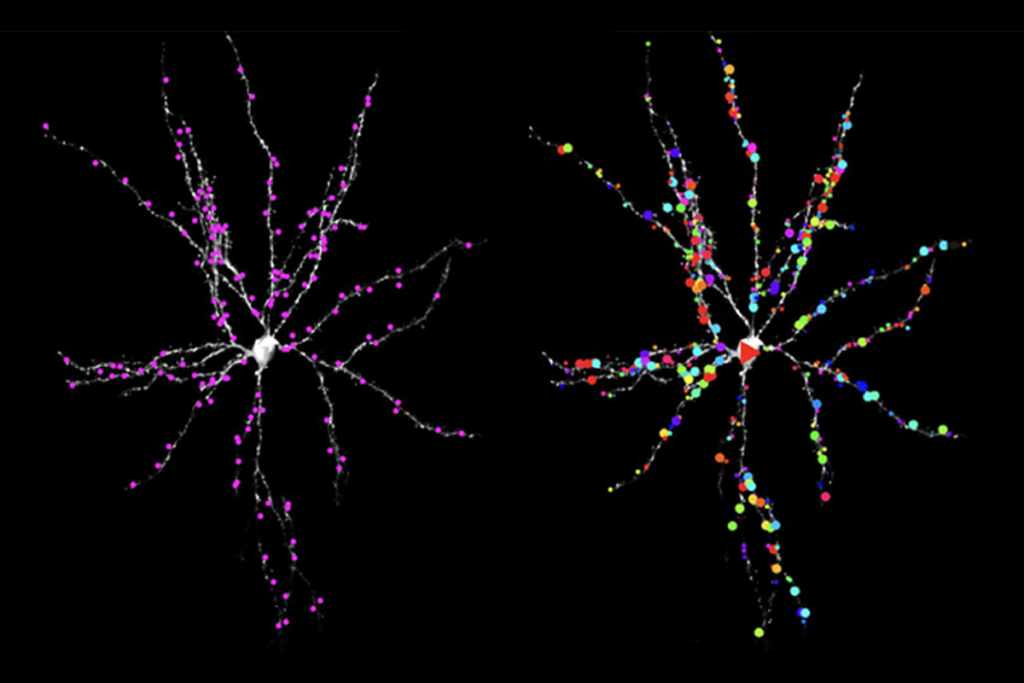

u’s study claims to show that mice exposed prenatally to sodium valproate have impairments in learning and memory, and unusually low levels of brain-derived neurotrophic factor (BDNF). Inhibiting a particular cell signaling pathway leads to an increase in the levels of microRNA-153, which in turn increases BDNF, inhibits cell death and contributes to the proliferation of mouse hippocampal neurons grown in culture dishes, the researchers claim in their study. Yu, who has not responded to multiple requests for comment, and her colleagues concluded that microRNA-153 could be a therapy for autism.The issues first came to light in May in comments posted to the post-publication review site PubPeer by an anonymous reviewer using the pseudonym Hoya camphorifolia.

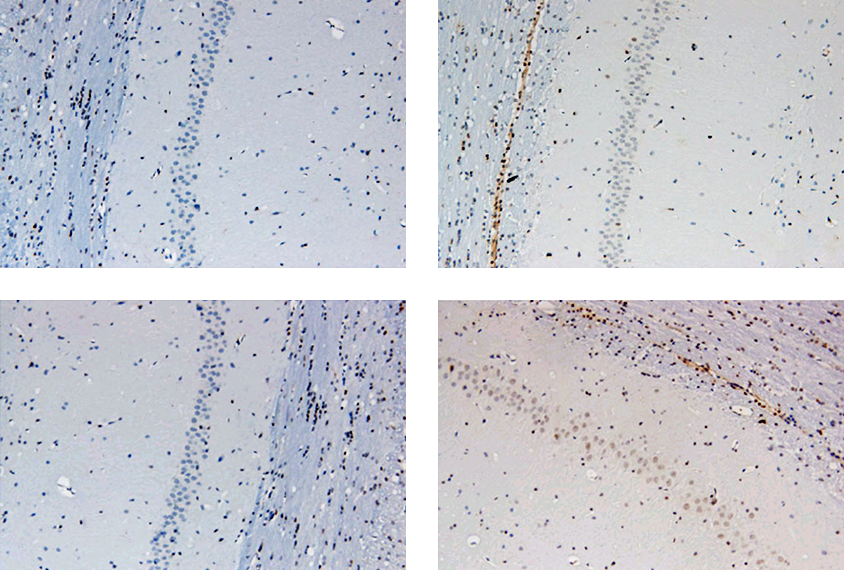

One of Hoya’s comments pointed out that a microscopy image of hippocampal neurons presented in the paper matches one used in at least two now-retracted articles by other researchers. Another noted flow cytometry results with overhanging edges, and a third highlighted the physical similarity of Western blot results — “like blobs of ink, soaking into blotting paper” — to those from an unrelated study, by a different team, that was retracted in March.

Hoya camphorifolia remarked on that retracted paper on PubPeer, too, pointing out that the wording of the acknowledgement and Western blot images were similar to those in papers from paper mills. Articles that seem to be products of those businesses have increasingly cropped up since 2016, according to Elisabeth Bik, a science integrity consultant based in San Francisco, California.

Many have been published by researchers in China, where until 2020 researchers were granted bonuses based on their publication records, particularly in journals indexed in the Science Citation Index, a compilation of the world’s leading scientific journals. The hospital where Yu works has churned out at least 13 confirmed paper-mill papers, according to a 2020 analysis, led by Bik, of more than 600 research articles.

The paper was published in Bioscience Reports and has been cited eight times, according to Clarivate’s Web of Science. The journal has a history of retractions: 24 in 2018, 45 in 2019, and 37 in 2020, according to the Retraction Watch Database. (Ivan Oransky, editor-in-chief of Spectrum, is co-founder of Retraction Watch.)

Bioscience Reports is aware of the recent comments on the 2019 paper, says Zara Manwaring, the journal’s managing editor. She notes that Hoya camphorifolia is “an incredibly vigilant identifier of image and data issues,” and the criticisms seem well supported. But before the journal reports the paper to its editorial board members, it plans to further investigate the study and reach out to the authors for supporting information, Manwaring says.

A new editorial board, led by editor-in-chief Weiping Han, took over the journal in June 2020. The board has introduced more stringent requirements for authors hoping to submit their manuscripts to Bioscience Reports, according to Manwaring. Researchers must list an institutional email to show their affiliation and are required to submit raw numbers for certain datasets, such as protein and nucleic acid sequences. In the two years since, there have been 15 retractions.

The issues raised about this autism study are part of a larger pattern in scientific research, says Nikolaos Mellios, assistant professor of neuroscience at the University of New Mexico in Albuquerque. He estimates that about 20 percent of the papers he reviews have incorrect or false data. “I try to reject as many papers that I can prove it happens,” Mellios says. “But obviously there needs to be systemic change in the peer review process.”

For scientists struggling to replicate experiments based on false data, it is easy to get discouraged and think “‘Oh, gee, I’m just not good at this … I’m not cut out for this kind of research,’” Byrne says. “The immediate impact is going to be on people’s careers, just wasted time. Wasted effort. Lost productivity.”

Longer term, the pattern of falsification of data can translate into lost opportunities for people in search of treatments, too. “You’re making people put money on something, and effort, and you’re delaying access for a potential treatment to patients,” Mellios says.

Identifying papers that potentially contain fabricated data requires thinking holistically about the authors’ argument, Byrne says. “I really look at the data and think, ‘Are these data really plausible?’”

Thinking critically about how authors claim to have used a scientific technique can also tip off readers (and reviewers) that something in the research doesn’t add up. For example, Yu’s team used flow cytometry, a technique that quantifies the number of cells in each phase of the cell division cycle. To make those cell cycle counts on neurons from mouse hippocampal tissue, the authors would have needed to coax those neurons to divide, something that mature neurons don’t do, Hoya camphorifolia points out in their final comment about Yu’s paper, posted earlier this month.

“Could the authors explain how they persuaded the neurons of adult mice to re-enter the cell cycle and multiply in cell culture?” Hoya camphorifolia wrote. “This would earn them a Nobel Prize.”