Sensory aspects of speech linked to language issues in autism

Language problems in children with autism may be partially rooted in an inability to integrate sight and sound when other people talk.

Children with autism pay just as much attention to speech that doesn’t match lip movements as to speech in which sight and sound are coordinated, according to a new study [1]. Typical children prefer speech in which the sensory cues are in sync.

Some people with autism have trouble learning to speak and understand words; others have minimal verbal skills or don’t speak at all. The new work suggests that these problems may stem in part from an inability to integrate sight and sound when other people talk, and from inattention to these cues.

“There are underlying mechanisms that bring about these sets of skills that then translate into language learning,” says lead researcher Giulia Righi, assistant professor of psychiatry and human behavior at Brown University in Providence, Rhode Island. “We really need to understand from a mechanistic view how these abilities come about.”

Regardless of diagnostic status, children who pay more attention to synchronous than asynchronous speech score better on a test of language ability, the researchers found.

“This study is one of many studies that will need to be done to further peel back our understanding of language development in kids with autism,” says Rebecca Landa, director of the Center for Autism and Related Disorders at the Kennedy Krieger Institute in Baltimore, who was not involved in the work.

Clashing cues:

The researchers gave a test called the Preschool Language Scales to 45 children with autism who were 5 years old on average, and to 32 typical children (age 3 on average). The test measures early language and literacy skills, such as vocabulary, sentence structure and ability to recognize letters.

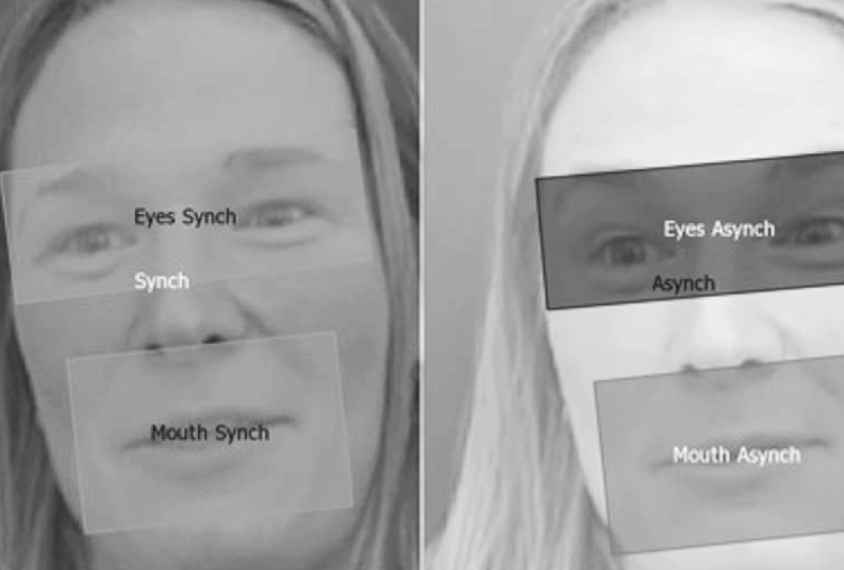

Righi and her colleagues used eye-tracking technology to monitor the children’s gaze as they watched videos of a woman speaking.

Each child viewed pairs of videos on a screen. One pair featured identical videos of the woman speaking in an animated voice, with the sound and picture synchronized. Another pair featured the same woman speaking, but the sound was delayed by 0.3 seconds in one of the two videos. The children also viewed synchronous speech paired with delays of 0.6 or 1.0 seconds.

The children in the autism group were equally likely to look at the asynchronous and the synchronous videos, regardless of the length of the sound delay. By contrast, the typical children preferred the synchronous video to those with a 0.6-second or 1-second sound delay. They showed no preference in the pairing that included the 0.3-second delay.

The autism group spent less time than the typical children looking at the screen overall, which suggests difficulty with attention to these sensory cues. The findings appeared 13 January in Autism Research.

The children with autism also were less likely to look at the speaker’s mouth than the typical children were, suggesting an aversion to looking at the mouth region of faces, says Elena Tenenbaum, a postdoctoral fellow in Stephen Sheinkopf’s lab at Brown University.

Attention to the mouth may help typical children pick out the video with the synched sound.

Language links:

Across both groups, older children and those who were more likely to watch the synchronous video scored higher on the language scale. And children who looked at the woman’s mouth or eyes also had higher language scores, suggesting that reliance on sensory synchrony is related to language development.

“One possible explanation for that is that attention to the mouth and eyes is facilitating their language learning,” Tenenbaum says. “But there are obviously alternative possibilities.”

Blind and deaf children can learn language, so there are likely to be several other mechanisms involved, says Helen Tager-Flusberg, director of the Center for Autism Research Excellence at Boston University, who was not involved in the study. “We know that’s not a critical requirement.”

Researchers should next study whether young toddlers who pay close attention to synchronous sensory cues acquire language skills faster than those who don’t, Landa says. If they do, the result would lend credence to the idea that attention to synchrony influences early language development.

The researchers plan to explore whether physiological changes, such as a quickening heartbeat, coincide with viewing patterns in children with autism. Such changes could hint at whether children with autism have a gut-level aversion to looking at faces.

References:

- Righi G. et al. Autism Res. Epub ahead of print (2018)
