A new eye-tracking program for VR headsets uses images of real-world environments to capture nuanced aspects of social attention in autistic people, according to unpublished research.

Researchers presented the findings virtually on Tuesday at the 2021 International Society for Autism Research annual meeting.

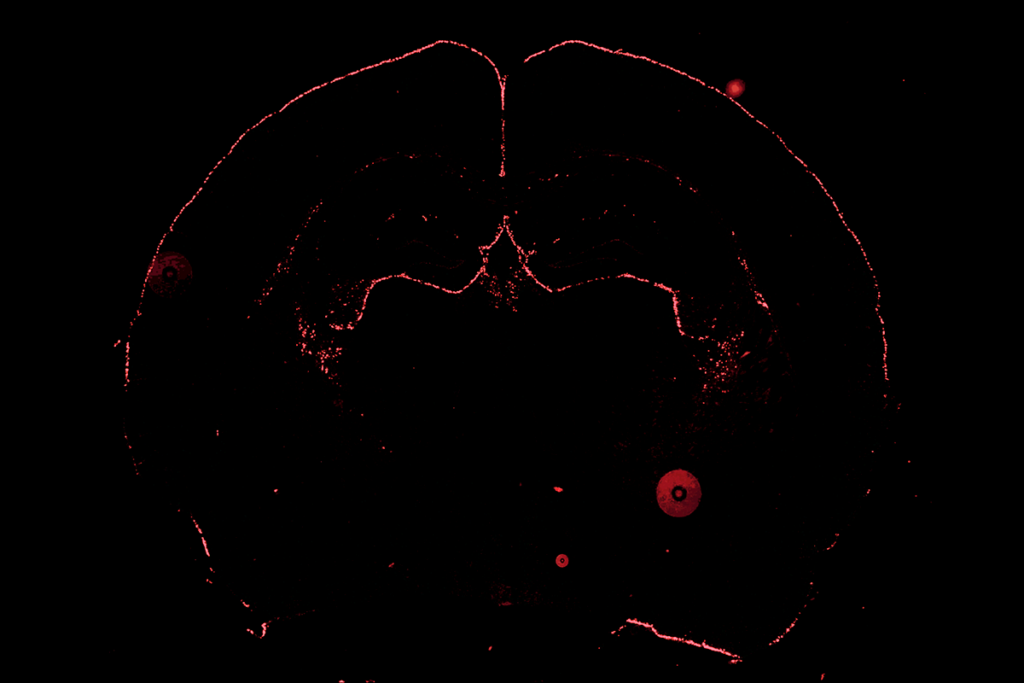

Previous eye-tracking studies have shown that autistic people generally look less at other people's faces than non-autistic people do, a tendency that appears as early as infancy. Such studies usually rely on eye-tracking devices set up in a laboratory to determine what a person is looking at in pictures or videos on a computer screen. Participants must keep their heads fixed in place, and the system yields only binary data: whether the participant is looking at a face or at an object.

The new technique enables researchers to evaluate how a person processes several types of social information in images or videos all at once, says study presenter A.J. Haskins, a graduate student in Caroline Robertson’s lab at Dartmouth College in Hanover, New Hampshire.

The system also lets viewers move their heads freely and actively explore a scene. According to a 2020 study from Haskins and her colleagues, viewers who can do so pay more attention to meaningful stimuli than viewers whose heads are fixed in place.

Look around:

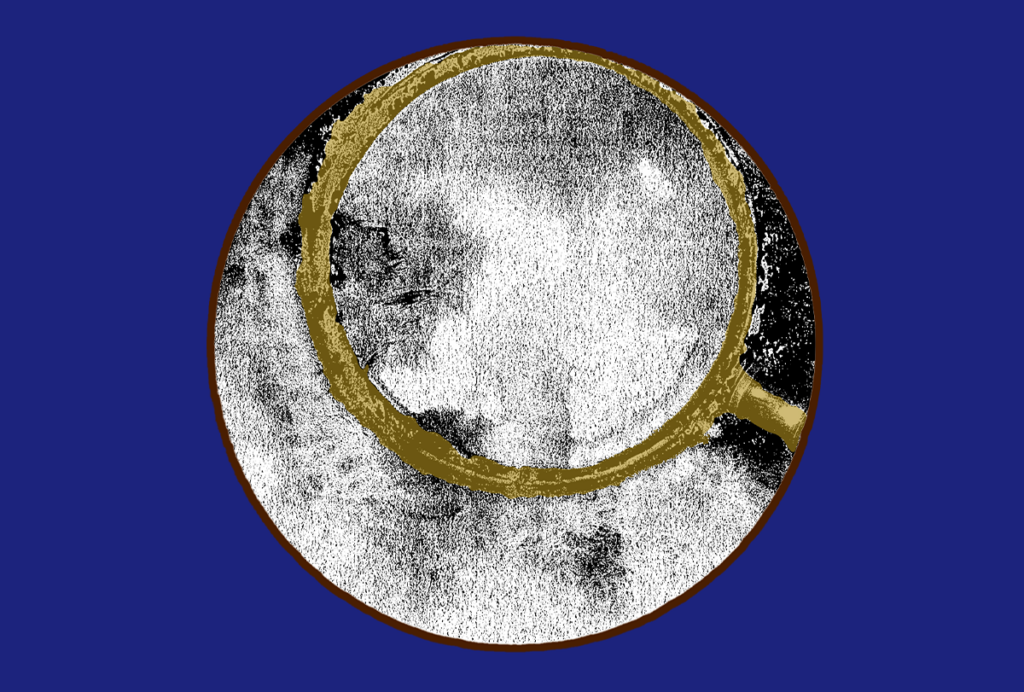

Haskins and her colleagues asked participants recruited online through Amazon Mechanical Turk (MTurk) to view photos containing both objects and people, such as a scene of people standing on a sidewalk. The researchers divided each image into smaller sections and asked five participants to rate the relative social importance of each section within the scene.

For example, one image shows three faces. The participants rated the face of a person using a phone as more socially important than that of a person holding a camera, and rated the camera holder's face as more socially important than a face painted in a mural.

“Not all of those faces should carry the same amount of social information despite all belonging to the same class of stimulus,” Haskins says.

In preliminary tests involving 19 autistic and 21 non-autistic participants, both groups preferentially attended to the items in the images with the greatest social importance.

Assigning graded levels of social importance to specific regions of an image should enable eye-tracking studies to better capture naturalistic attention, Haskins says. The ultimate aims, she says, are to improve researchers' ability to group autistic people into subtypes and to generate insights into how real-world environments could be adapted to meet people's needs.
