Alycia Halladay is chief science officer of the Autism Science Foundation.

From this contributor

New program offers $35K grants to study ‘profound autism’

People who have ‘profound autism’ — those with severe intellectual disability, limited communication abilities or both — tend to be excluded from research. The Autism Science Foundation seeks to change that.

Questions for Amaral, Halladay: Boosting brainpower

A new network of brain banks aims to collect and disburse tissue donations to U.S. autism researchers.

Explore more from The Transmitter

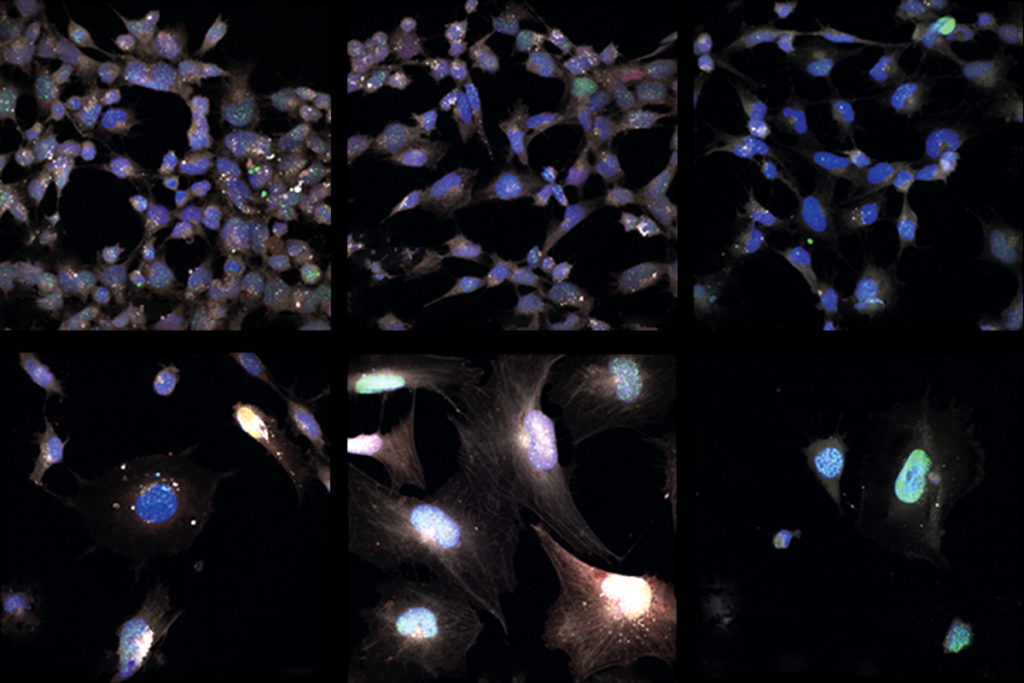

Exon-skipping approach boosts levels of key Rett syndrome protein

Deleting a small region of the MECP2 gene partially restored function in neurons derived from people with Rett-associated variants.

Frameshift: How Caitlin Vander Weele made science communication her business

Her favorite part of research was talking about it. So she left academia and turned that passion into a successful company.

Signs of aging vary across brain cells

Senescence presents differently depending on the cell type, toxic trigger and neighboring cells, two new studies find.
