The brain effortlessly tackles countless variations of the same task. A person walking around a city, for example, can avoid a crowded sidewalk or stop at a traffic light, even as the surrounding scene changes from block to block.

This ability may come down to the geometry of the activity of neural populations, according to a new study. A single equation representing that geometry predicts how well neural networks, both biological and artificial, adapt to new but related tasks, the study found.

When researchers analyze the collective activity of a large population of neurons, that activity takes on a certain geometric structure, such as a donut-shaped torus. This neural geometry can link population activity to behavior, but most studies use it to simply describe neuronal activity, says SueYeon Chung, assistant professor of physics and applied mathematics at Harvard University and an investigator on the new paper.

Instead, “we wanted to come up with a unifying theory between the geometry of representation and our ability to generalize across tasks,” Chung says. “There are certain shapes and structures of those geometric activities that we can look for in real data, and those shapes will precisely predict the system’s ability to generalize.”

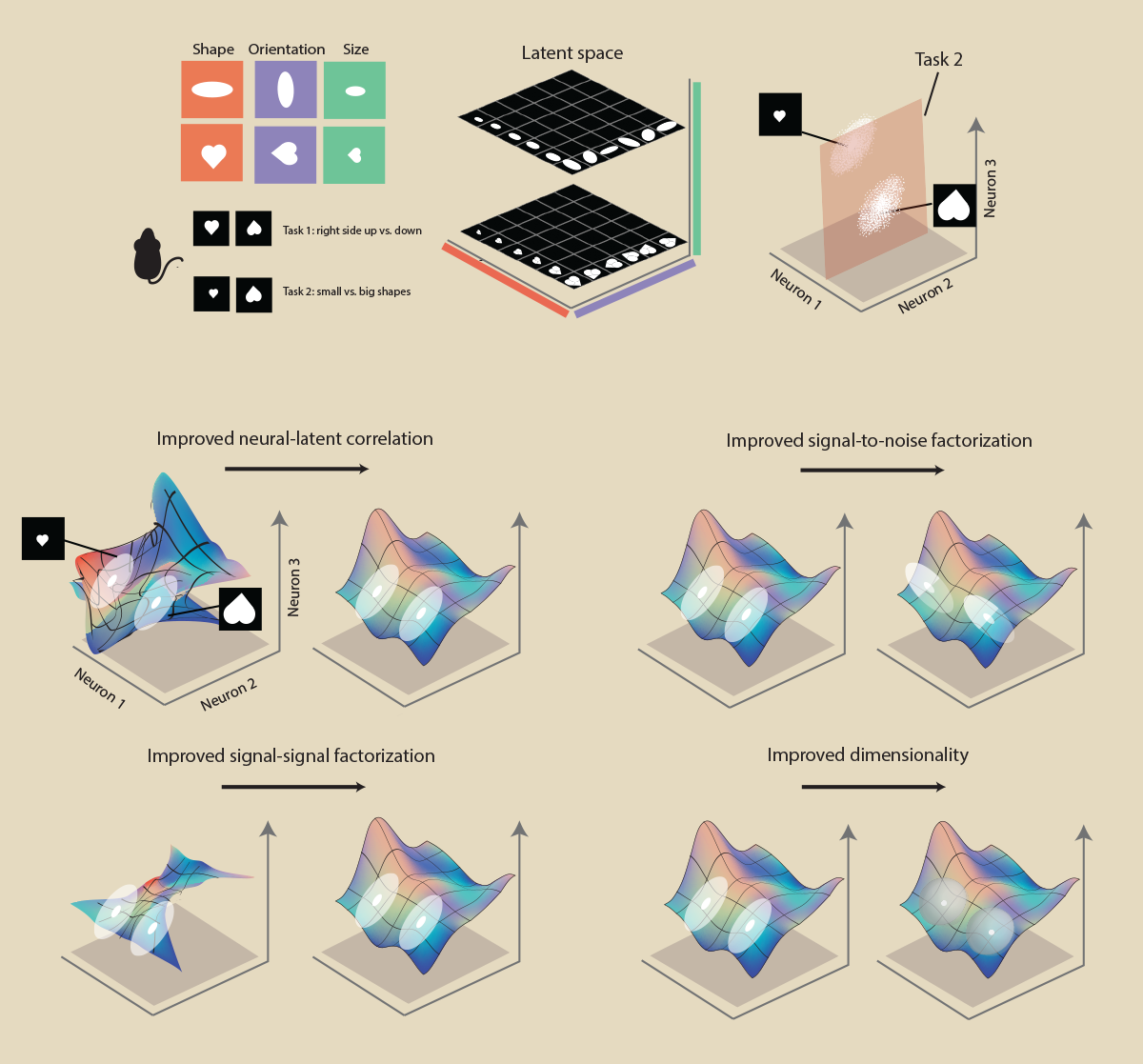

Four terms in the equation Chung and her colleagues derived represent distinct features of neural geometry and predict how well neural populations handle new tasks that draw on prior information, the study showed. Chung and her team applied this theory to neuronal data from rats, monkeys and artificial networks, showing that the four variables accurately predicted future behavior.

“A lot of people say that it’s very hard to put equations on neural activity, or that AI is a black box,” says Nina Miolane, assistant professor of electrical and computer engineering at the University of California, Santa Barbara, who was not involved in the work. But this study is endeavoring to “define the mathematical structure of intelligence, not focusing only on behavior, or the final output, but opening up the black box and having the courage to say, ‘We can put this into an equation.’”

Four terms in the team’s error formula predicted a neural population’s ability to generalize. Generalization improved with a higher correlation between neural activity and the task-related variables; a higher dimensionality of the neural responses; increased signal-to-noise factorization (meaning task-unrelated noise is kept separate from the information the neural activity is tracking); and increased signal-signal factorization (meaning the population encodes different task-related variables separately from one another).
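
To make one of these terms concrete, the sketch below estimates the dimensionality of a set of population responses using the participation ratio, a measure commonly used in neuroscience. This is an assumption made for illustration: the article does not say how the study defines dimensionality, and the function names and the trials-by-neurons data layout here are hypothetical.

```python
import random


def covariance_matrix(responses):
    """Sample covariance between neurons.

    `responses` is a list of trials; each trial is a list with one
    activity value per neuron (hypothetical layout for this sketch).
    """
    n_trials = len(responses)
    n_neurons = len(responses[0])
    means = [sum(trial[j] for trial in responses) / n_trials
             for j in range(n_neurons)]
    centered = [[trial[j] - means[j] for j in range(n_neurons)]
                for trial in responses]
    return [
        [sum(row[a] * row[b] for row in centered) / (n_trials - 1)
         for b in range(n_neurons)]
        for a in range(n_neurons)
    ]


def participation_ratio(responses):
    """Participation ratio: (trace C)^2 / trace(C^2).

    It is close to 1 when activity varies along a single direction and
    approaches the number of neurons when variance is spread evenly.
    """
    cov = covariance_matrix(responses)
    trace = sum(cov[i][i] for i in range(len(cov)))
    # For a symmetric matrix, trace(C^2) equals the sum of squared entries.
    trace_of_square = sum(value * value for row in cov for value in row)
    return trace * trace / trace_of_square


rng = random.Random(0)
n_trials, n_neurons = 500, 10

# Low-dimensional population: every neuron follows one shared latent signal.
weights = [rng.uniform(0.5, 1.5) for _ in range(n_neurons)]
shared = [[z * w for w in weights]
          for z in (rng.gauss(0, 1) for _ in range(n_trials))]

# High-dimensional population: each neuron fluctuates independently.
independent = [[rng.gauss(0, 1) for _ in range(n_neurons)]
               for _ in range(n_trials)]

print("shared latent:", participation_ratio(shared))
print("independent:  ", participation_ratio(independent))
```

In this toy comparison, the population driven by a single shared signal has a participation ratio of about 1, while the independently fluctuating population scores close to the number of neurons. The other three terms would need their own definitions from the paper and are not sketched here.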