Shifting neural code powers speech comprehension

Dynamic coding helps explain how the brain processes multiple features of speech—from the smallest units of sounds to full sentences—simultaneously.

The brain tracks many features of speech—from phonemes, the smallest units of speech sounds, to the meaning of full sentences—in parallel by way of distributed neural patterns that change over time, two new studies suggest.

This type of dynamic coding challenges previous speech-processing models that matched each feature with a single, brief neural response, says Frederic Theunissen, professor of neuroscience and integrative biology at the University of California, Berkeley, who was not involved with the new work.

“People think of neural representation as being kind of static,” Theunissen says. “You have this part of the brain that represents phonemes, and when your phonemes come in, you have a neural code for that phoneme,” and so on for other levels of language processing.

Because the neural responses to a language feature last longer than the feature itself, though, this one-to-one model creates a paradox: How does the brain maintain information about a language feature long enough to process sentences and extract meaning without those representations interfering with each other?

“How is it that you’re still processing the thing in the past while the stuff in the future is happening?” says Laura Gwilliams, assistant professor of psychology at Stanford University, an investigator on the new studies. “In a one-to-one model, that would be catastrophic.”

According to the hierarchical dynamical model proposed in one of the new studies, published in the Proceedings of the National Academy of Sciences in October 2025, the brain processes multiple features of speech at the same time without interference by moving information through different neural patterns. Similarly, the superior temporal gyrus, an area important for auditory processing, also encodes features of a word dynamically over time, according to the other new study, published in Neuron in November. This neural code enables the brain to track the timing of speech features, both studies show.

The findings also reveal that information about upcoming speech features in a sentence can be decoded from neural activity before a person actually hears them, supporting the idea of predictive coding in language, says Philip Resnik, professor of linguistics at the University of Maryland, who was not involved with the new studies.

“If you’re doing purely bottom-up processing, that’s very hard to explain,” Resnik says. “But if you believe that higher levels are constantly making predictions about lower levels, then this is actually very consistent with that idea.”

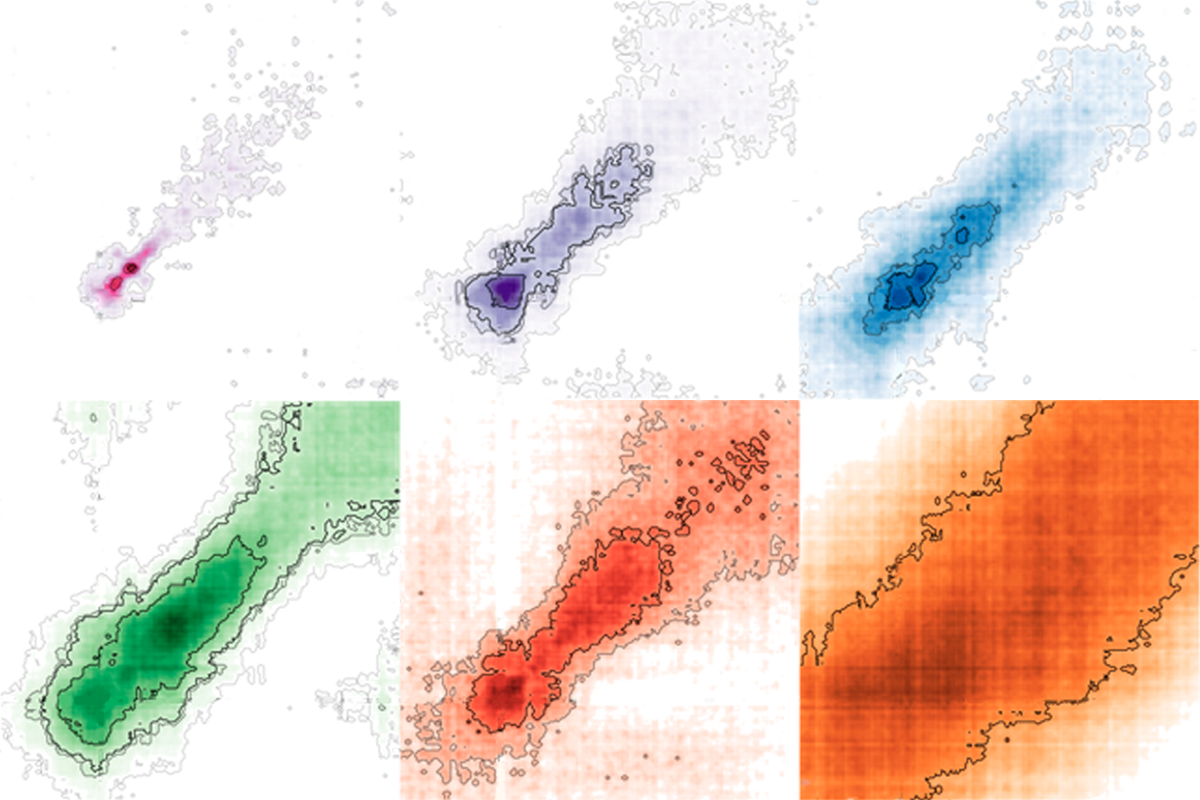

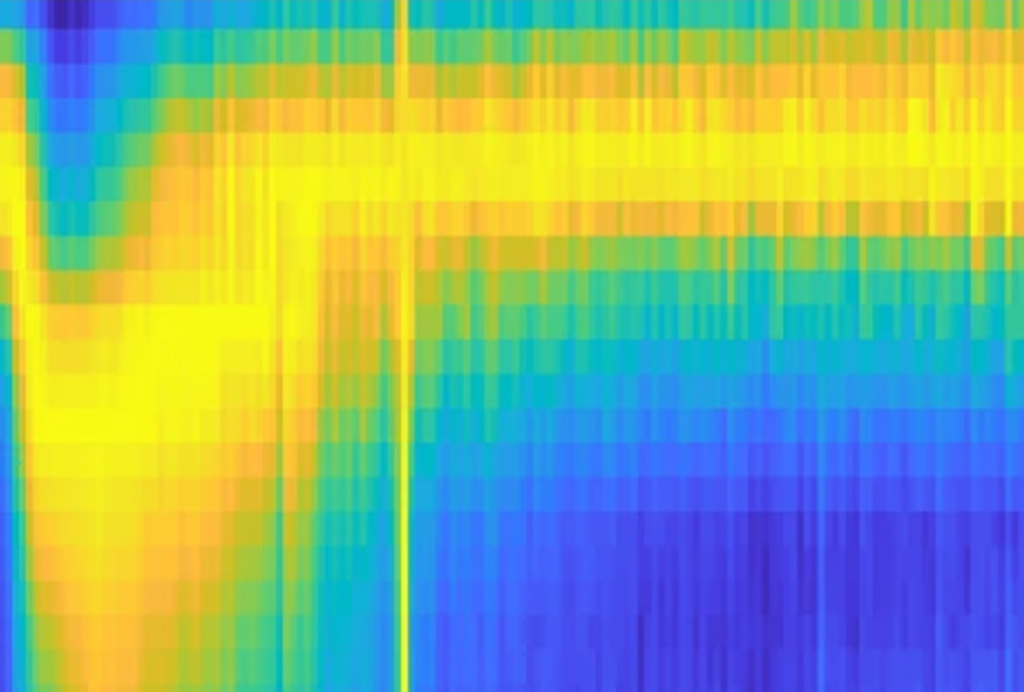

Each speech feature sets off a sequence of neural configurations, Gwilliams and her colleagues found in the October study by using machine learning to decode magnetoencephalography activity in 21 participants listening to an audiobook. Because the neural configurations are ordered over time, each pattern contains a “time stamp” indicating when the feature began. With these sequences, the brain is “able to actually code the relative order of those words in a sentence so that you can then assemble them into the appropriate meaning,” Gwilliams says.
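One common way to test for a code like this is temporal generalization: train a decoder on activity at one moment and test it at every other moment. The toy Python sketch below illustrates that idea on simulated data. It is not the analysis from either study; the function names, the simulated "sensors," the signal strength and the simple difference-of-means classifier are all assumptions made for illustration.

```python
import numpy as np


def simulate(n_trials=200, n_sensors=20, n_times=6, amplitude=3.0, seed=0):
    """Simulate a binary speech feature carried by a different pattern at each time step.

    All values here are illustrative assumptions, not parameters from the studies.
    """
    rng = np.random.default_rng(seed)
    labels = np.arange(n_trials) % 2  # alternate the two feature values
    # One orthonormal sensor pattern per time step (columns of q).
    q, _ = np.linalg.qr(rng.standard_normal((n_sensors, n_times)))
    signs = 2 * labels - 1  # map {0, 1} to {-1, +1}
    signal = signs[:, None, None] * amplitude * q[None, :, :]
    noise = rng.standard_normal((n_trials, n_sensors, n_times))
    return signal + noise, labels  # data shape: (trials, sensors, times)


def temporal_generalization(data, labels):
    """Train a difference-of-means classifier at each time, test it at every time."""
    n_trials, _, n_times = data.shape
    half = n_trials // 2
    train, test = slice(0, half), slice(half, n_trials)
    scores = np.zeros((n_times, n_times))
    for t_train in range(n_times):
        x, y = data[train, :, t_train], labels[train]
        mean1, mean0 = x[y == 1].mean(axis=0), x[y == 0].mean(axis=0)
        weights = mean1 - mean0
        bias = -weights @ (mean1 + mean0) / 2
        for t_test in range(n_times):
            pred = (data[test, :, t_test] @ weights + bias > 0).astype(int)
            scores[t_train, t_test] = (pred == labels[test]).mean()
    return scores  # rows: training time, columns: testing time


if __name__ == "__main__":
    data, labels = simulate()
    print(np.round(temporal_generalization(data, labels), 2))
```

In this simulation, accuracy is high only when the training and testing times match and falls to roughly chance elsewhere. That diagonal profile is what a code that moves through distinct patterns would be expected to produce, whereas a static code would generalize across time.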

Neural signals for higher-level features appeared earlier and persisted longer than those for lower-level features, contradicting the one-to-one model. The brain can maintain information about the higher-level features while analyzing lower-level features as they continue to occur, allowing for the simultaneous integration of these signals across different levels of the hierarchy into meaning.

These dynamics in speech processing are “showing that things are more complex,” says Jonathan Simon, professor of electrical and computer engineering at the University of Maryland, who was not involved with the work. “It’s not just that [neural patterns are] more complicated but that there is an extension—that as you go higher up in the hierarchy, [the neural signals] go longer, and even, in this case, also earlier.”

Rather than the brain relying on discrete areas to encode unique features, as scientists previously thought, one brain area by itself can dynamically encode multiple features of a word through evolving neural activity, the November study shows. As the word is processed, different features of that word—including sounds, stress and word identity—become available in neural activity one after the other in the superior temporal gyrus, the researchers found using electrocorticography to measure that activity while participants listened to short news stories.

And neural activity scaled with word length, tracking how much of each word had elapsed, again providing a way to track time during speech, Gwilliams says.

Beyond language, dynamical coding is also at work in the visual system and in response to other auditory inputs such as musical tones, Gwilliams points out. “It makes me think that this is a type of canonical computation process in the brain,” she says. “It’s such a clever computational strategy.”
