This paper changed my life: Appreciating John Hopfield’s brilliant neural network

In a 1982 paper, the Nobel laureate created his namesake recurrent neural network—work that taught Maria Geffen to always ground research questions in biology.

Answers have been edited for length and clarity.

What paper changed your life?

Neural networks and physical systems with emergent collective computational abilities. Hopfield J.J. Proceedings of the National Academy of Sciences (1982)

In this seminal paper, John Hopfield came up with an artificial neural network and showed that it could store memories.

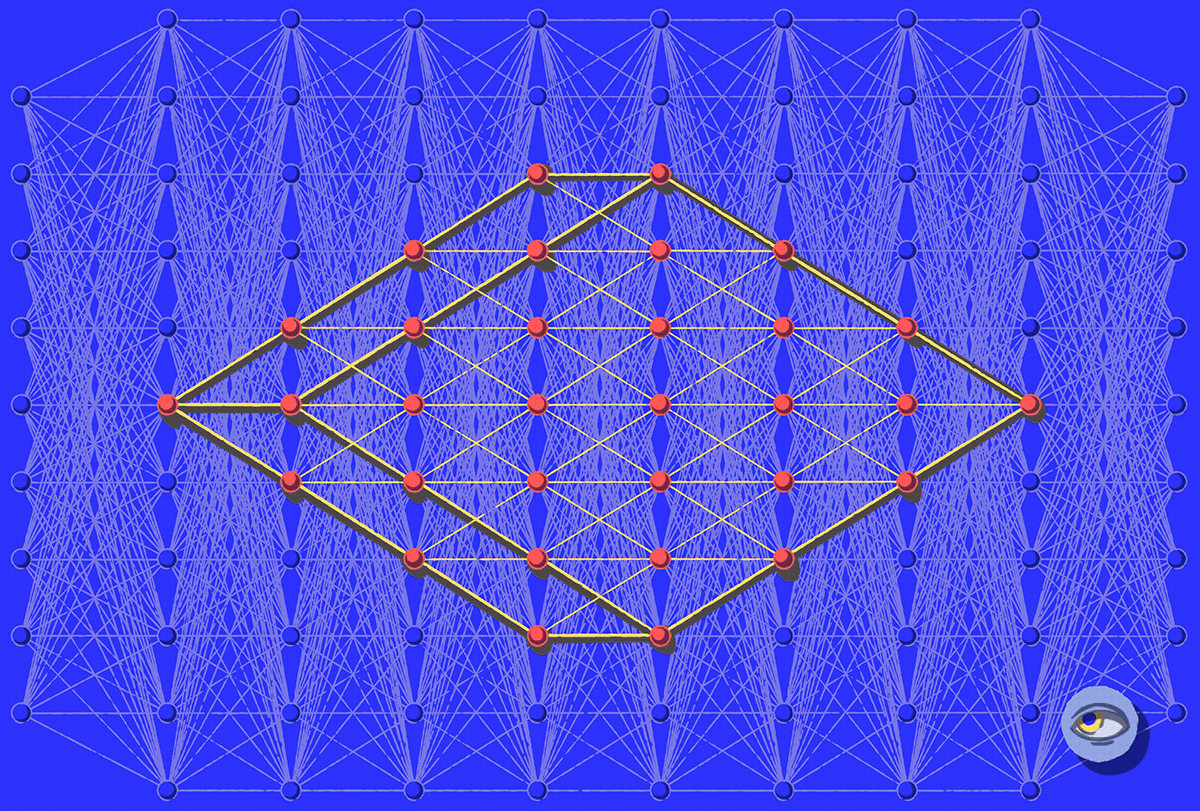

To understand brain functions, neuroscientists often abstract the neurons into simple computational units. Hopfield created an artificial neural network that really, really abstracted neurons into binary units and connected them with a weight matrix built on Hebbian principles, which means neurons that are active at the same time develop stronger connections. He found that his biology-inspired artificial network developed emergent properties, such as the capability to update its units asynchronously and still settle into a stable attractor state, a self-sustaining pattern of activity often used to model memory storage in computational neuroscience. He showed that the network could store patterns (i.e., memories) and retrieve them even when given only partial input information.
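For readers who want to see the idea in miniature, here is a rough sketch of that kind of network in plain Python. It is an illustration under stated assumptions, not a reproduction of the paper's exact formulation: the units take values of +1 or -1 (a common textbook convention), the function names `train_hebbian` and `recall` are made up for this example, and the two stored patterns are arbitrary toy data.

```python
import random


def train_hebbian(patterns):
    """Build a weight matrix from +1/-1 patterns with a Hebbian rule.

    Units that are active together in a stored pattern get a stronger
    (more positive) connection. Assumption: no self-connections.
    """
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += pattern[i] * pattern[j] / n
    return weights


def recall(weights, cue, max_sweeps=20, seed=0):
    """Update one unit at a time, in random order, until nothing changes."""
    rng = random.Random(seed)
    state = list(cue)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        order = list(range(n))
        rng.shuffle(order)  # asynchronous: units are visited one by one
        for i in order:
            total_input = sum(weights[i][j] * state[j] for j in range(n))
            new_value = 1 if total_input >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:  # stable state reached (an attractor)
            break
    return state


# Two toy "memories" (hypothetical data chosen for this example).
stored = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
weights = train_hebbian(stored)

# A corrupted copy of the first memory: the first unit is flipped.
cue = [-1, 1, 1, 1, -1, -1, -1, -1]
print(recall(weights, cue))  # prints the first stored pattern
```

In this toy case the network settles back into the first stored pattern from the corrupted cue, which is the pattern-completion behavior described above. The random update order is just one simple way to make the updates asynchronous.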

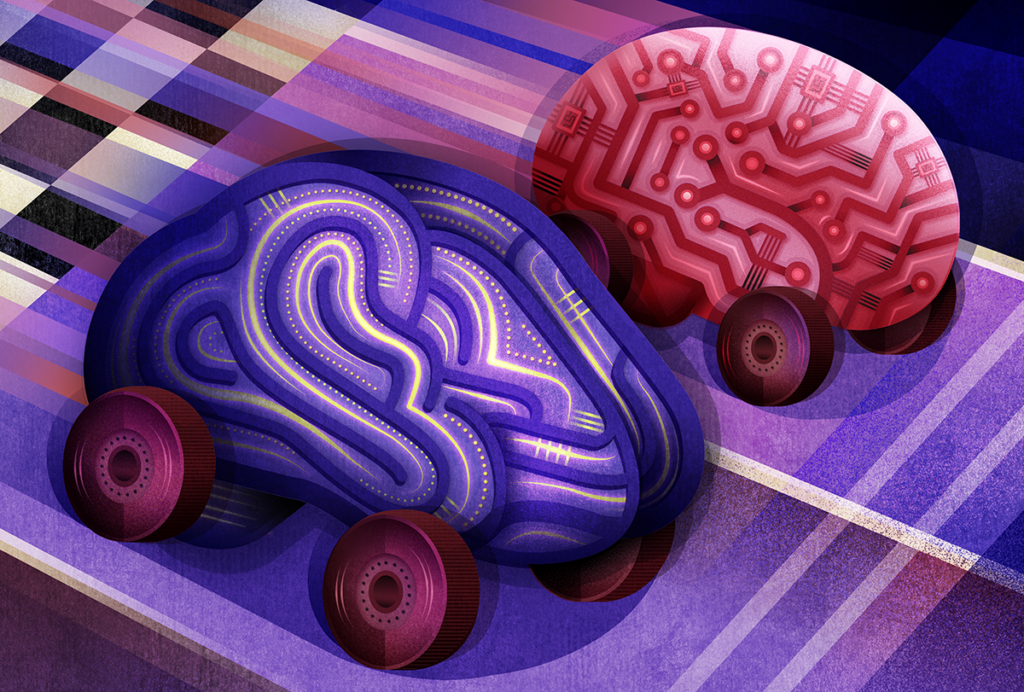

This simple system could do very, very complex computations. I love the elegance of the system, and I love how important it is for the field of computational neuroscience. This work was fundamental for the development of artificial neural networks and artificial intelligence and is partly the reason why Hopfield won the Nobel Prize in Physics in 2024. It’s incredibly interesting how such a short and relatively simple paper had such an influence on the field decades later.

When did you first encounter this paper?

When I was an undergraduate student at Princeton University, I was very interested in psychology, but at the same time, I was pretty good at math. One day, my undergraduate adviser, Clarence Schutt, suggested I check out Hopfield’s work because it might align with my interests. So that’s how I came across his papers. Then I had the privilege of taking a computational neuroscience course he taught and doing my undergraduate thesis with him. It really shaped the rest of my career as a computational and systems neuroscientist.

Why is this paper meaningful to you?

I think this was probably one of the first papers that I read in computational neuroscience. Because of that, I didn’t really have anything to compare it with, but most of my research interests since then really rely on this paper.

What I loved about it is that it uses language from statistical physics and dynamical systems to understand fundamental properties of the brain. Neuronal networks are defined by how neurons are connected to each other. Once you’ve defined the connectivity matrix of a network, this enables you to ask questions about its properties. How do networks optimally update? How do they deal with multiple inputs? What if you have multi-layer networks? All of these questions start off with the basics that are laid out in this paper.

How did this research change how you think about neuroscience and influence your scientific trajectory?

Hopfield was interested in this idea of attractors, these stable points of sustained activity in a system, which are modeled in his neural network. So he wanted to know how neurons in the brain were able to have a kind of activity that’s just continuously propagating. During my undergraduate thesis, he had me look through literature to find examples of this, and we found one in the rat whisker cortex. We wanted to understand if sustained neuronal responses to whisker stimulation in the somatosensory cortex were due to the whiskers’ mechanical properties. So I measured the length, thickness and curvature of different whiskers and estimated their elastic coefficients. I realized that whisker geometry is very important for texture perception in rodents, which had largely been overlooked in the literature. I ended up doing my thesis studying the different resonance frequencies that whiskers could detect. I continued working on that for a couple of years with Christopher Moore and Mark Andermann, doing some really cool experiments measuring neuronal properties of whisker responses.

Since then, I’ve continued studying how ecologically meaningful stimuli give rise to complex network activity. In my postdoctoral work, I studied fractal properties of sounds and how different complex sounds are represented in the auditory system, working with James Hudspeth and Marcelo Magnasco. And now in my lab, I’m focused on auditory processing but also trying to link all the senses to understand how complex networks in the brain process and encode information; we’re studying auditory-olfactory integration, auditory-visual integration and auditory-somatosensory integration.

Is there an underappreciated aspect of this paper you think other neuroscientists should know about?

There were two radical ideas that kind of got pulled together: One was the idea that this network could update asynchronously, and the other was that you can reconstruct memories from a partial input. I love that, because those concepts are rooted in biology.

Human brains try to complete memories or perform associations based on partial inputs every day. For instance, someone could say, “Bad Bunny,” and your brain will think, “Puerto Rico.” Grounding these concepts in biology is a really fundamental aspect of this paper. I saw Hopfield do it firsthand when we were working on my thesis project. He really wanted to understand how biological systems work. I took that with me for the remainder of my research as kind of a core principle.