Ariel Davis

Illustrator

From this contributor

New questions around motor neurons and plasticity

A researcher’s theory hangs muscle degeneration on a broken neural circuit.

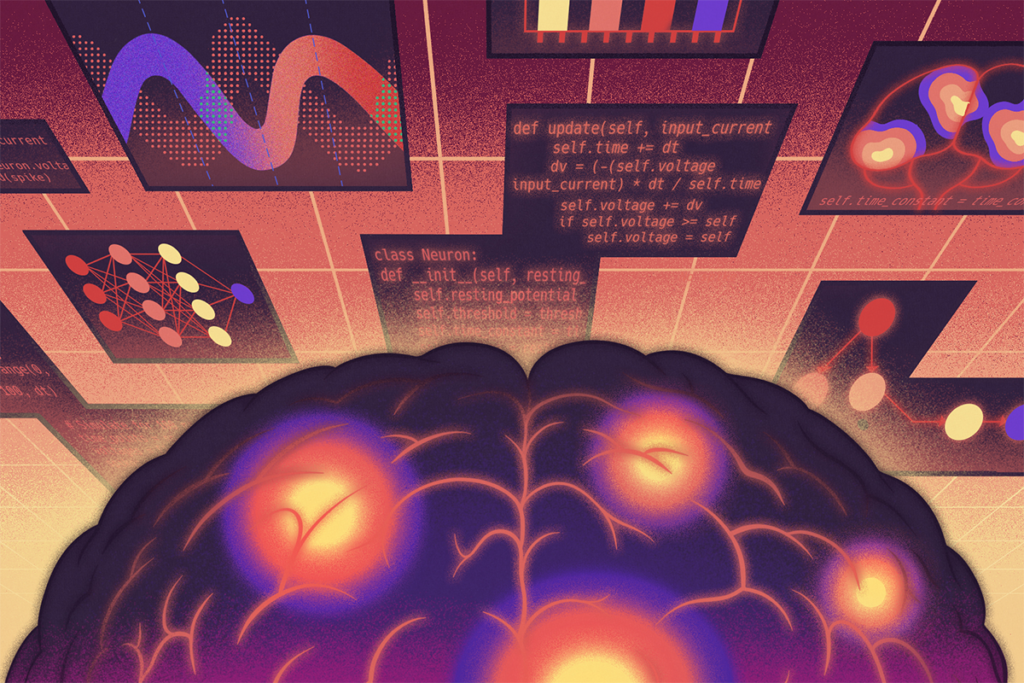

Should neuroscientists ‘vibe code’?

Researchers are developing software entirely through natural language conversations with advanced large language models. The trend is transforming how research gets done—but it also presents new challenges for evaluating the outcomes.

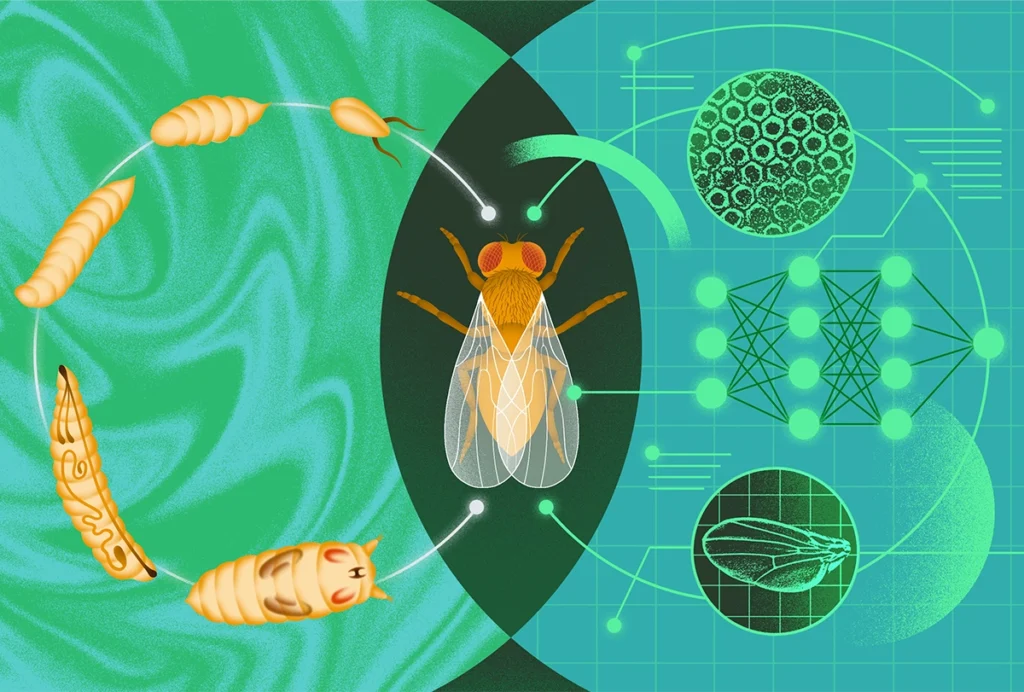

Computational and systems neuroscience needs development

Embracing recent advances in developmental biology can drive a new wave of innovation.

Experimentalists versus modelers — whose work has more lasting impact?

My informal analysis of some of neuroscience’s most cited papers from 1999 explores what drives scientific durability.

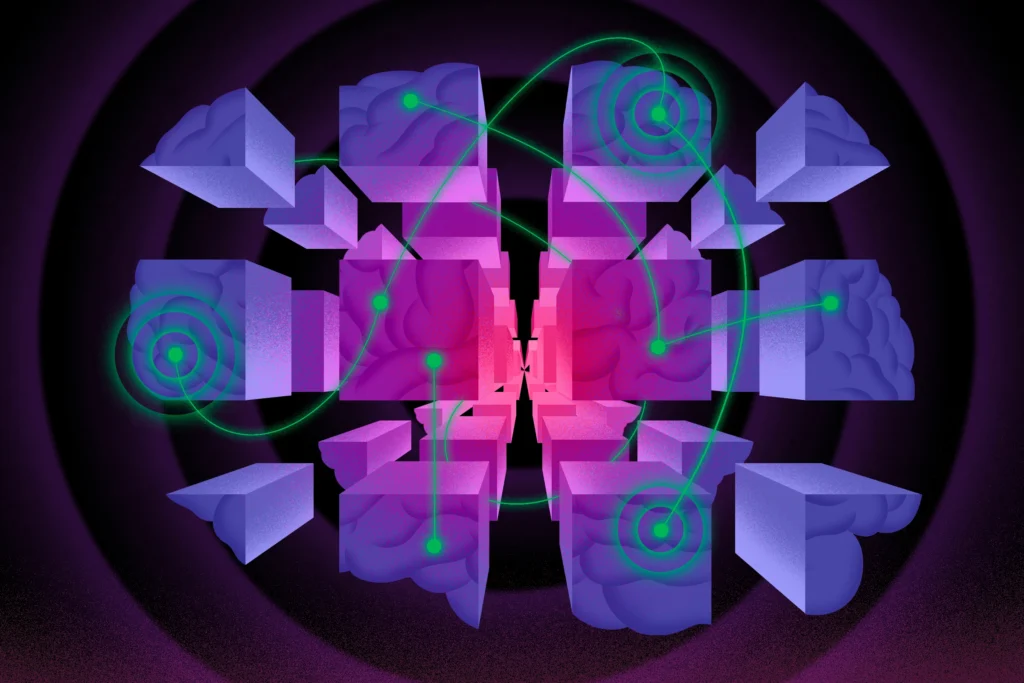

Name this network: Addressing huge inconsistencies across studies

Entrenched practices have stymied efforts to build a universal taxonomy of functional brain networks. But a new tool to standardize brain-imaging findings could bring us a step closer.

Explore more from The Transmitter

Four protein synthesis pioneers win Kavli Prize in Neuroscience

Their research revealed how neurons synthesize proteins in previously unrecognized places.

How to incorporate open-science practices into neuroscience training

If we want emerging neuroscientists to implement open science throughout their careers, we need to establish its practices as a core principle of training.

A new atlas of abstracts visualizes the field of human brain mapping—where does your work fit?

Satrajit Ghosh talks to Mac Shine about a community-built tool that places every abstract from the 2026 Organization for Human Brain Mapping meeting inside a semantic map of the broader neuroscience literature. Finding your neighbors in that space might matter more than you think.