Switching neural code may solve ongoing face-recognition debate

Face patch cells in macaque monkeys initially respond to images of any object but rapidly transition to attend to faces exclusively, a new study finds.

Face-selective neurons in the macaque visual cortex dynamically change their tuning properties, according to a study published last month in Nature. The findings suggest that artificial neural networks—which are stably tuned—may not fully model visual systems in primates.

Neurons in the inferotemporal cortex initially respond to both faces and inanimate objects, the study found. But then those cells switch to respond to specific facial features, including inter-eye distance and hair color. That switch likely corresponds to a shift from the brain recognizing the presence of a face to gauging what that face looks like, according to the study investigators.

“[This shift] is a phenomenon that has never been characterized before,” says Laura Gwilliams, assistant professor of psychology at Stanford University, who was not involved in the work. “We need to rethink how neurons code information.”

Conflicting evidence has fueled a debate in face perception research: On the one hand, the visual cortex contains clusters of highly selective neurons—known as face patches—indicating that the region uses specialized mechanisms for face processing. And stimulating face-selective brain areas in primates, including humans, distorts the perception of faces but not of non-face objects. Yet, other studies suggest that the neurons employ a general code to respond to a range of visual features.

The new paper “offers a potential solution to solve this controversy,” says Shahab Bakhtiari, assistant professor of psychology at the Université de Montréal, who did not take part in the study. “The two theories were looking at the same thing but at different [timepoints].”

Neurons in the inferotemporal cortex initially use a general code, then switch to a face-specific code in a matter of milliseconds, the new study found. By contrast, convolutional neural networks (image-processing algorithms that attempt to model visual pathways) use a fixed, nonspecific code for face recognition.

“I think it’s very interesting to find that, actually, the brain is not doing a simple convolutional neural network—it is doing something much more interesting,” says study investigator Doris Tsao, professor of neuroscience at the University of California, Berkeley, and chief scientist of Astera Neuro. A code that changes over time “could provide a new model for AI systems,” she says.

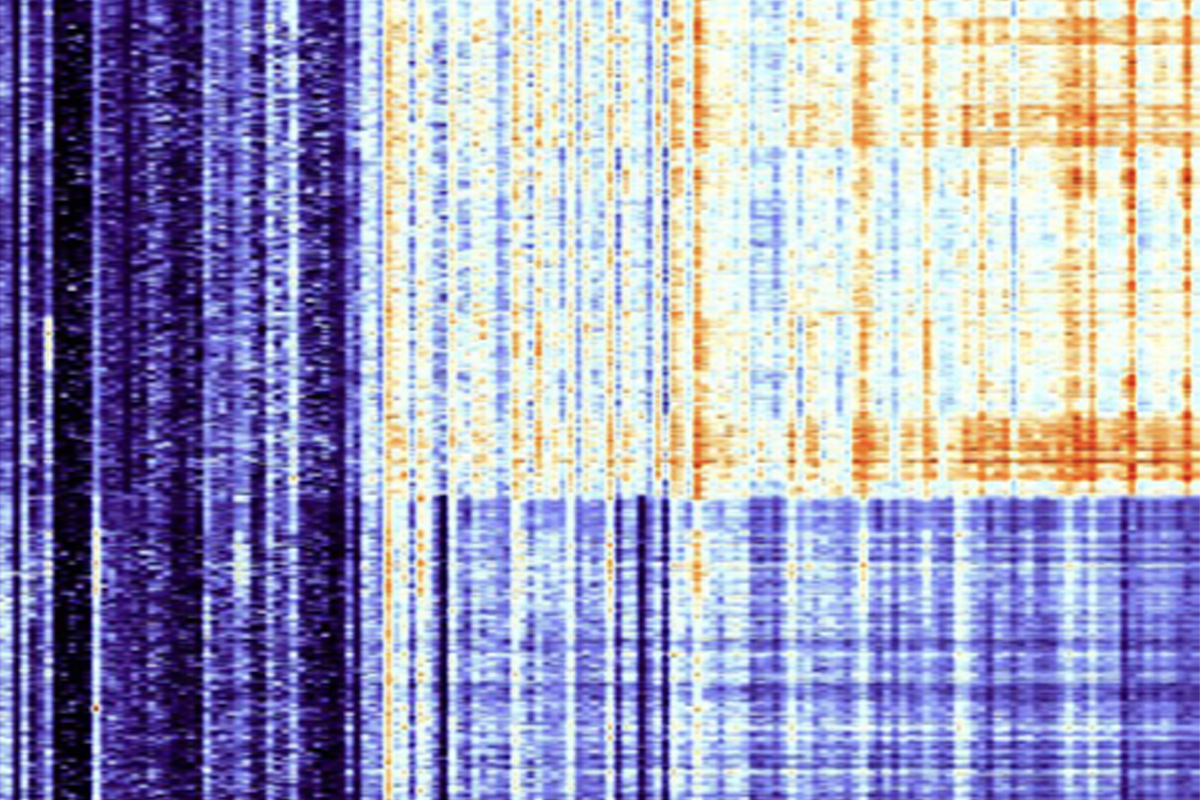

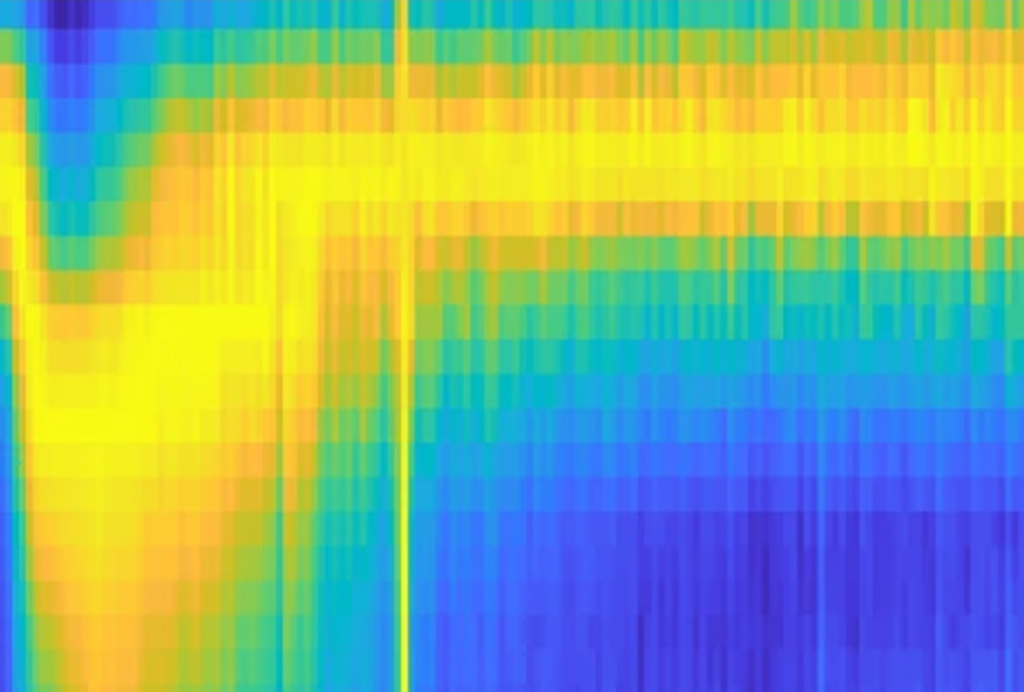

When macaques see a face, the face patch neurons immediately respond to broad features that are not unique to faces, such as roundness, the recordings revealed. After 100 milliseconds, however, those cells make a concerted switch to become less responsive to general features and more attuned to face-specific ones.

That suppression of low-level features at later timepoints might “reduce the initial high firing rate of face cells to save bandwidth, allowing neurons to start coding differences among high-level features,” says study investigator Yuelin Shi, a graduate student in Tsao’s former lab at Caltech. “It seems like a very efficient, energy-saving way of coding.”

The coding change didn’t occur when the macaques were shown an image of a non-face object, suggesting that a face is needed to prompt the switch. Identifying that process—known as stimulus gating—is clearly an advance in our understanding of vision networks, says Johannes Fahrenfort, assistant professor of cognitive psychology at the Vrije Universiteit Amsterdam, who was not involved in the work.

But whether the coding switch really represents a shift from detection to discrimination is unclear, Fahrenfort says. “One could also interpret it as a change in perceptual organization or in conscious experience.”

Those interpretations aren’t mutually exclusive and could “describe the same phenomenon at different levels,” Shi says. Though future work could determine whether coding shifts correspond to changes in consciousness, the group’s current findings strongly point to a transition from face detection to face discrimination, she adds.

For instance, the brain appears to be better at discriminating faces after the tuning shift: Reconstructions of faces based on neuronal responses recorded after the switch included more identity-specific information than those based on earlier recordings, the study found.

The findings align with evidence that other sensory processes—including speech processing—involve dynamic coding changes. Those studies confirm that strict definitions of neuronal tuning—in which cells show a fixed response to select stimuli—ought to be abandoned, says Grace Lindsay, assistant professor of psychology and neural science at New York University, who was not involved in the work. “These are complex dynamic systems, and the relationship between the neural response and stimulus is not going to be scaling along a single dimension.”

Tsao and her team are now investigating whether local circuits or top-down processing drive the coding change, she says, adding that initial findings point to the former, but they “don’t have clinching evidence.” The team also hopes to determine how changes in tuning properties affect a monkey’s experience, Shi says. Rather than reconstructing images based on neuronal responses, she says, they can train macaques to report when they spot a face by pointing to its key features.

That way, the group can investigate whether tuning switches occur when people mistakenly see a face in a piece of burnt toast or an electrical outlet, Tsao says. “The very strong prediction here is that when you see it as a face, this code change will happen.”
