Like many neuroscientists in training, I “learned how to code” by adapting someone else’s scripts for my own experiments. In retrospect, I spent an alarmingly large chunk of graduate school trying to reverse engineer MATLAB code, loosely guided by the internet and my patient labmates.

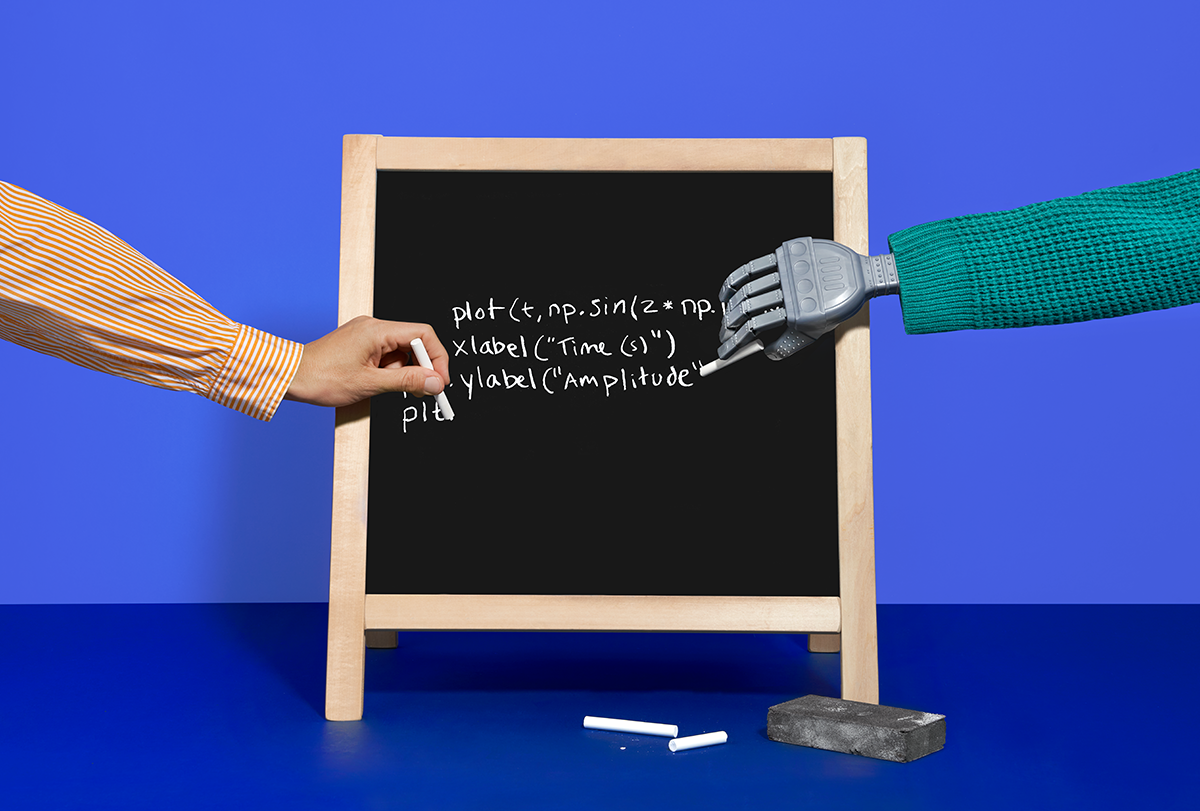

So yeah, I’ll admit that I’m jealous that new programmers now have artificial-intelligence agents that can not only debug their code but also teach them how to write it. But then again, there’s a part of me that is glad to have learned how to code without the temptation of AI. The question, then, is: To what extent are those hours of struggle necessary for developing the kind of code comprehension that neuroscientists need to use AI tools smartly?

The short answer is that we don’t really know. The longer answer is that I’m trying to find out, in a way that not only leverages AI tools for personalized education but also builds classroom community and includes student voices. Amid the widespread and legitimate fears about students using AI to shortcut their learning, there are also opportunities, especially when it comes to teaching neuroscience students how to code.

AI is changing education, but not uniformly. Just as we adapted to the onset of technologies such as calculators and computers, we are now adjusting to the presence of AI tools.

Even within computer science education, the conversation about AI is highly variable. Some computer science educators have argued that the harms of AI outweigh the benefits, whereas others, including my colleague Leo Porter, are teaching alongside it in their classes. It’s hard to say what the overall pulse is, but most educators acknowledge that students still need to be able to design and debug code, even if they’re not writing it de novo.

A few years ago, I designed a programming class for biology students. When I started teaching the class in 2022, a few students were experimenting with AI. Now almost every student is using it in one way or another, though they, too, wonder how they should be using it.

Despite the tenor of most conversations about student AI use, not all students are using it to cheat. Many are scared to miss out on AI as a tool but don’t want to violate academic integrity. I once had to convince a student who was failing a programming class to at least try using AI as a tutor. Our most vulnerable students are less likely to use AI for fear of cheating, and women use it less than men. Other students are diving headfirst into AI without knowing anything about prompt engineering. Regardless of how we personally feel about using AI, we can’t ignore these growing divides and the possibility that they could perpetuate ongoing inequities in education.

Earlier this year, I opened a conversation about AI-assisted coding in my course. Inspired by an activity I had seen elsewhere, I showed students seven possible levels of AI use, ranging from asking conceptual questions to having it write full programs. I asked students to first discuss what they felt was appropriate for our different course elements and then log their answer on an anonymous live survey. After seeing their answers, I told them what I felt was appropriate.

The 90 or so students in class that day answered along the entire spectrum, but on average, they were fairly well-aligned with me. We agreed that they shouldn’t ask AI to write the code for their weekly coding assignments. When it came to final projects, I was more comfortable with AI than they were, on average, given that at that point in the class, they should know enough to use it to generate small code snippets and write tests for their code.

Student self-reporting may not always match their actual behavior, but that’s not the point. This wasn’t an exercise intended to make students feel bad the next time they use AI. It was intended to demonstrate that they are expected to play a role in their own educational journey, to ensure that we are starting from a shared level of AI literacy and to spark shared, explicit reflection about our classroom norms.

Of course, I’m fully aware that some students may be using AI to complete assignments or even the entirety of their final projects. In response to this, I have added more in-person paper exams in which students predict outputs, debug code or trace code snippets. On our final exam, students were also asked to reflect on choices they made in their projects, an attempt to ensure that they hadn’t generated a bunch of code that they didn’t understand. The age of AI has brought on changes in my course that enable me to assess students on more complex cognitive tasks, asking them to predict, appraise and revise, rather than simply remember.

Our rules about AI should be tailored to specific contexts, and my guidelines are different for each course. In my neurobiology laboratory class, where students are expected to analyze data and produce full lab reports, AI is OK for writing code but not for writing reports. I firmly believe that writing and thinking are fundamentally intertwined; for this reason, I also don’t use AI for any of my own writing.

Learning objectives and the student population in a course are also key considerations. My programming course isn’t designed to train software engineers, though some of my students have gone on to be developers at places such as Microsoft. It’s designed for students who are curious to know whether they will like programming and would like to add it to their skill set. Students often enter the class fearful of programming; after the class, some of them experiment with code for personal projects, other coursework or their research. If more students continue coding after my class, I will have succeeded as an educator.

Because I’m teaching programming to students who aren’t computer science majors, I think quite a bit about encouraging them to persist in developing these skill sets. We know from decades of research that there continue to be significant gender and socioeconomic disparities in who codes, and I don’t want to replicate those disparities in my class. So in addition to asking myself, “What will help students learn?” I’m also asking, “What will encourage them to persist?”

In my ideal world, we would spend our precious time together in class thinking critically about the choices we make in our code, not describing when we should use colons or semicolons. For most of programming education’s history, the colons have gotten in the way, and a few flashes of red (an indicator of a coding error) on a student’s screen could be enough to reinforce feelings that they don’t belong. In addition to normalizing errors and providing a supportive classroom community, AI might provide a bridge for students who would otherwise opt out. At the same time, I worry that these same students will perceive engagement with AI as an illegitimate form of learning, rather than a viable way to improve skills and get feedback. That worry makes it all the more important to establish classroom norms around its use.

Last week, I wrote a Python script to help students in my laboratory class analyze data they had collected for their final project. After looking at the code, a student approached me with what she called a “personal question”: “Do you use AI for coding?”

I do, I told her without hesitation. I turned my laptop toward her and showed her how I had AI tools integrated into my coding environment. I told her a bit about some of the back and forth that was necessary to get our code to do what we needed—the initial draft after my first prompt wasn’t quite right, so some iteration was necessary. I told her that I had one idea for how to implement our analysis, but AI had suggested alternatives based on a literature search, and now we could run the script and compare their outcomes.

We shouldn’t hold onto habits, including ways of training our students, simply because they have become a rite of passage. If the goal is training students to be good scientists, we need to think carefully about what skills are now needed to be a good scientist. The necessary skills aren’t recognizing syntax; instead, students should be able to design computational workflows, evaluate their outputs and, perhaps most importantly, collaborate on decisions about how we proceed into this next stage of scientific discovery.